This is an article in a multipart series on the concepts of ray tracing. I am not sure where this will lead, but I am open to suggestions. We will be creating code that runs inside Blender. Blender has ray tracing renderers of its own, of course, but that is not the point: by reusing Python libraries and Blender's scene building capabilities we can concentrate on true ray tracing issues like shader models, lighting, etc.

I generally present stuff in a back-to-front manner: first an article with some (well commented) code and images of the results, then one or more articles discussing the concepts. The idea is that this encourages you to experiment and have a look at the code yourself before being introduced to theory. How well this works out we will see :-)

So far the series consists of several articles labeled ray tracing concepts.

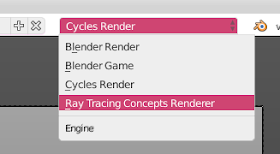

The code presented in the first article of this series was a bit of a hack: running from the text editor with lots of built-in assumptions is not the way to go, so let's refactor it into a proper render engine that will be available alongside Blender's built-in renderers.

A RenderEngine

All we really have to do is derive a class from Blender's RenderEngine class and register it. The class should provide a single method, render(), that takes a Scene parameter and writes a buffer with RGBA pixel values to the render result.

    import bpy

    class CustomRenderEngine(bpy.types.RenderEngine):
        bl_idname = "ray_tracer"
        bl_label = "Ray Tracing Concepts Renderer"
        bl_use_preview = True

        def render(self, scene):
            # the actual image size depends on the resolution percentage
            scale = scene.render.resolution_percentage / 100.0
            self.size_x = int(scene.render.resolution_x * scale)
            self.size_y = int(scene.render.resolution_y * scale)

            if self.is_preview:  # we might differentiate later
                pass             # for now ignore completely
            else:
                self.render_scene(scene)

        def render_scene(self, scene):
            buf = ray_trace(scene, self.size_x, self.size_y)
            # flatten the height x width x 4 buffer to a list of RGBA pixels
            buf.shape = -1,4

            # Here we write the pixel values to the RenderResult
            result = self.begin_result(0, 0, self.size_x, self.size_y)
            layer = result.layers[0].passes["Combined"]
            layer.rect = buf.tolist()
            self.end_result(result)
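
The bookkeeping in render() and render_scene() can be tried outside Blender. The following is a minimal sketch, not the add-on's actual code: the helper names scaled_size and flatten_pixels are hypothetical, and plain nested lists stand in for the NumPy buffer. It shows how the resolution percentage determines the image size and how a height x width x 4 buffer becomes the flat list of RGBA pixels that is assigned to layer.rect.

```python
def scaled_size(resolution_x, resolution_y, percentage):
    """Return (width, height) in pixels after applying the resolution percentage."""
    scale = percentage / 100.0
    return int(resolution_x * scale), int(resolution_y * scale)


def flatten_pixels(buf):
    """Turn rows of RGBA pixels (height x width x 4) into one flat list of pixels.

    This mirrors what `buf.shape = -1,4` followed by `buf.tolist()` does
    for a NumPy array; here nested lists are used instead (an assumption
    made to keep the sketch free of dependencies).
    """
    return [list(pixel) for row in buf for pixel in row]


width, height = scaled_size(1920, 1080, 50)
print(width, height)

# a tiny image of 2 rows by 3 columns, every pixel opaque white
buf = [[[1.0, 1.0, 1.0, 1.0] for _ in range(3)] for _ in range(2)]
pixels = flatten_pixels(buf)
print(len(pixels), pixels[0])
```

The number of pixels in the flat list must equal size_x * size_y, which is why render() computes both sizes before anything is traced.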

Option panels

For a custom render engine all panels in the render and material options will be hidden by default. This makes sense because not all render engines use the same options. We are interested in just the dimensions of the image we have to render and the diffuse color of any material, so we explicitly add our render engine to the COMPAT_ENGINES set of each of those panels, along with the basic render buttons and the material slot list.

    def register():
        bpy.utils.register_module(__name__)
        from bl_ui import (
                properties_render,
                properties_material,
                )
        properties_render.RENDER_PT_render.COMPAT_ENGINES.add(CustomRenderEngine.bl_idname)
        properties_render.RENDER_PT_dimensions.COMPAT_ENGINES.add(CustomRenderEngine.bl_idname)
        properties_material.MATERIAL_PT_context_material.COMPAT_ENGINES.add(CustomRenderEngine.bl_idname)
        properties_material.MATERIAL_PT_diffuse.COMPAT_ENGINES.add(CustomRenderEngine.bl_idname)

    def unregister():
        bpy.utils.unregister_module(__name__)
        from bl_ui import (
                properties_render,
                properties_material,
                )
        properties_render.RENDER_PT_render.COMPAT_ENGINES.remove(CustomRenderEngine.bl_idname)
        properties_render.RENDER_PT_dimensions.COMPAT_ENGINES.remove(CustomRenderEngine.bl_idname)
        properties_material.MATERIAL_PT_context_material.COMPAT_ENGINES.remove(CustomRenderEngine.bl_idname)
        properties_material.MATERIAL_PT_diffuse.COMPAT_ENGINES.remove(CustomRenderEngine.bl_idname)
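
Because register() and unregister() repeat the same call for every panel, the pattern could be folded into a small helper. This is only a sketch under assumptions: set_engine_compat is a hypothetical name, and the two Fake... classes are stand-ins for Blender's panel classes so the example runs outside Blender. It treats COMPAT_ENGINES as a Python set, which is what the add() and remove() calls above suggest.

```python
def set_engine_compat(panels, engine_id, enable):
    """Add engine_id to, or remove it from, the COMPAT_ENGINES set of each panel."""
    for panel in panels:
        if enable:
            panel.COMPAT_ENGINES.add(engine_id)
        else:
            # discard() does not raise if the id is already gone
            panel.COMPAT_ENGINES.discard(engine_id)


# Hypothetical stand-ins for Blender's panel classes (not the real bl_ui classes)
class FakeDimensionsPanel:
    COMPAT_ENGINES = {'BLENDER_RENDER'}


class FakeDiffusePanel:
    COMPAT_ENGINES = {'BLENDER_RENDER', 'BLENDER_GAME'}


panels = [FakeDimensionsPanel, FakeDiffusePanel]

set_engine_compat(panels, "ray_tracer", True)    # what register() would do
print(sorted(FakeDimensionsPanel.COMPAT_ENGINES))

set_engine_compat(panels, "ray_tracer", False)   # what unregister() would do
print(sorted(FakeDimensionsPanel.COMPAT_ENGINES))
```

Using discard() instead of remove() is a design choice in this sketch: unregistering twice then does nothing instead of raising a KeyError.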

Reusing the ray tracing code

Our previous ray tracing code is adapted to use the height and width arguments instead of arbitrary constants:

    import numpy as np

    def ray_trace(scene, width, height):
        lamps = [ob for ob in scene.objects if ob.type == 'LAMP']

        intensity = 10  # intensity for all lamps
        eps = 1e-5      # small offset to prevent self intersection for secondary rays

        # create a buffer to store the calculated intensities
        buf = np.ones(width*height*4)
        buf.shape = height,width,4

        # the location of our virtual camera (we do NOT use any camera that might be present)
        origin = (8,0,0)

        aspectratio = height/width
        # loop over all pixels once (no multisampling)
        for y in range(height):
            yscreen = ((y-(height/2))/height) * aspectratio
            for x in range(width):
                xscreen = (x-(width/2))/width
                # get the direction. camera points in -x direction;
                # xscreen spans -0.5 .. 0.5, so horizontal FOV = 2*atan(0.5), approx 53 degrees
                dir = (-1, xscreen, yscreen)

                # cast a ray into the scene

                # ... identical code omitted ...

        return buf
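
The mapping from pixel indices to ray directions is easy to check in isolation. The sketch below pulls the relevant lines out of the loops into a hypothetical helper, pixel_direction (the name and the 400 x 200 image size are assumptions for illustration), and prints the direction for the center pixel and for the first pixel.

```python
import math


def pixel_direction(x, y, width, height):
    """Return the (unnormalized) ray direction for pixel (x, y)."""
    aspectratio = height / width
    xscreen = (x - (width / 2)) / width
    yscreen = ((y - (height / 2)) / height) * aspectratio
    # the camera looks down the negative x axis
    return (-1, xscreen, yscreen)


width, height = 400, 200
print(pixel_direction(200, 100, width, height))  # center pixel
print(pixel_direction(0, 0, width, height))      # first pixel

# xscreen spans -0.5 .. 0.5 at a distance of 1 along the view axis,
# so the horizontal opening angle is 2 * atan(0.5)
fov = math.degrees(2 * math.atan(0.5))
print(round(fov, 1))
```

Multiplying yscreen by the aspect ratio keeps pixels square: for an image that is wider than it is high, the vertical extent of the screen shrinks accordingly.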
